5 Effective Strategies for A/B Testing Ad Creatives

Setting up an A/B testing framework is one of the topics marketing teams discuss most often internally. With a seemingly infinite number of combinations of ad copy, creative, and landing pages in the mix, a disciplined approach to testing creatives becomes critical. Here are a few things I learned from testing creatives at scale on paid search, display, app push, and several other channels.

Establish a culture of experimentation

It is important to encourage teams to take a hypothesis-driven approach to making changes. Marketers are, by nature, driven to improve continuously by trying new ideas. To truly leverage A/B testing for meaningful business decisions, however, the whole company needs a culture that encourages hypothesis testing and learns from both successful and unsuccessful tests.

Invest early in an underlying experimentation platform

A company operating at scale may want to run several dozen, or even several hundred, tests at any given time. Investing in an experimentation platform not only allows multiple tests to run simultaneously and orthogonally (each test's assignments are independent of every other test's), it also produces complete and trustworthy test results.
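One common way a platform keeps concurrent tests orthogonal is to hash each user ID together with a per-experiment salt, so that a user's bucket in one test tells you nothing about their bucket in another. The sketch below is a minimal illustration under that assumption, not a description of any particular platform; `assign_variant` and the experiment names are hypothetical.

```python
import hashlib
from collections import Counter


def assign_variant(user_id: str, experiment_id: str, variants: list[str]) -> str:
    """Deterministically bucket a user into one variant of one experiment.

    Hashing user_id together with experiment_id (acting as a per-experiment
    salt) makes assignments in different experiments statistically
    independent, which is what lets many tests run at once.
    """
    key = f"{experiment_id}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    # Map the bucket onto equal-width slices, one slice per variant.
    index = bucket * len(variants) // 10_000
    return variants[index]


if __name__ == "__main__":
    # Hypothetical experiments running on the same users at the same time.
    users = [f"user-{i}" for i in range(20_000)]
    combos = Counter(
        (
            assign_variant(u, "banner-color", ["A", "B"]),
            assign_variant(u, "headline", ["A", "B"]),
        )
        for u in users
    )
    # Each of the four combinations should hold roughly a quarter of users.
    print(combos)
```

Because assignment is a pure function of the IDs, no lookup table is needed and a returning user always sees the same creative.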

Learn more about Kartick from his Mobile Heroes profile.

Use the right tools

Running a full-funnel analysis is insightful but expensive. Using Druid for data ingestion, exploration, and aggregation, together with a UI such as Superset or Pivot, makes it quick to filter and split the data, so marketers can spend their time iterating on tests.
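The snippet below is a tool-agnostic sketch of the filter-then-split pattern such a UI performs, written against plain Python dicts rather than Druid's actual query interface. The row fields, the `summarize` helper, and the numbers are hypothetical, chosen only to show the mechanics.

```python
from collections import defaultdict
from typing import Callable, Iterable, Optional


def summarize(
    rows: Iterable[dict],
    group_by: tuple[str, ...],
    where: Optional[Callable[[dict], bool]] = None,
) -> dict[tuple, float]:
    """Filter rows, split them by the group_by dimensions, and return CTR per group.

    Each row is assumed (hypothetically) to carry dimension fields such as
    "variant" and "channel" plus "impressions" and "clicks" counts.
    """
    totals = defaultdict(lambda: [0, 0])  # group key -> [impressions, clicks]
    for row in rows:
        if where is not None and not where(row):
            continue  # drop rows that fail the filter
        key = tuple(row[dim] for dim in group_by)
        totals[key][0] += row["impressions"]
        totals[key][1] += row["clicks"]
    return {key: clicks / imps for key, (imps, clicks) in totals.items() if imps > 0}


if __name__ == "__main__":
    # Toy rows for illustration only; not real campaign data.
    rows = [
        {"variant": "A", "channel": "search", "impressions": 1000, "clicks": 30},
        {"variant": "B", "channel": "search", "impressions": 1000, "clicks": 40},
        {"variant": "A", "channel": "display", "impressions": 2000, "clicks": 20},
        {"variant": "B", "channel": "display", "impressions": 2000, "clicks": 18},
    ]
    print(summarize(rows, ("variant",)))  # overall CTR per variant
    print(summarize(rows, ("channel", "variant")))  # split by channel as well
    print(summarize(rows, ("variant",), where=lambda r: r["channel"] == "search"))
```

Splitting by channel matters because an overall winner can hide a segment where it loses, as the toy display rows show.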

Rough-size the hypothesis

It is also good practice to roughly estimate the impact of a hypothesis before deploying the actual test. Even the most advanced testing platform requires some upfront investment to set up each test, so rough-sizing the expected lift lets teams be smart about where they allocate resources.
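One standard way to rough-size a test is the normal-approximation sample-size formula for comparing two proportions, such as click-through rates. The sketch below assumes a two-sided test with a 5% significance level and 80% power by default; `sample_size_per_arm` and `days_needed` are hypothetical helpers, and a given platform may apply different corrections.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(
    baseline: float, relative_lift: float, alpha: float = 0.05, power: float = 0.8
) -> int:
    """Approximate impressions needed per arm to detect a relative lift in a rate.

    Uses the two-proportion normal approximation:
    n = (z_{1-a/2} * sqrt(2 p(1-p)) + z_{power} * sqrt(p1(1-p1) + p2(1-p2)))^2 / (p2 - p1)^2
    where p is the average of p1 and p2.
    """
    if relative_lift == 0:
        raise ValueError("relative_lift must be non-zero")
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    if not (0 < p1 < 1 and 0 < p2 < 1):
        raise ValueError("rates must lie strictly between 0 and 1")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def days_needed(n_per_arm: int, arms: int, daily_impressions: int) -> int:
    """Days of traffic required to fill every arm (hypothetical helper)."""
    return math.ceil(n_per_arm * arms / daily_impressions)


if __name__ == "__main__":
    n = sample_size_per_arm(baseline=0.02, relative_lift=0.10)
    print(n, days_needed(n, arms=2, daily_impressions=50_000))
```

For a 2% baseline CTR and a 10% relative lift, this works out to roughly 80,000 impressions per arm. If `days_needed` exceeds the time or traffic a team can spare, the hypothesis may not be worth testing in that form.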

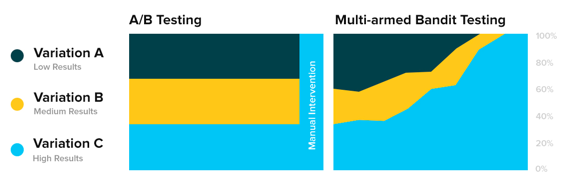

Use techniques like MAB to reduce waste

For tests that require speed and automation, one option to consider is the multi-armed bandit (MAB) approach, in which traffic is shifted toward the better-performing variant even while the test is still running. Compared with a traditional A/B test, MAB works particularly well for promotional banners with a limited time window. With a fixed A/B split, if significance is not reached by the end of the promotional period, the results arrive too late to act on; a bandit instead starts favoring the stronger banner while the promotion is still live.

Variation Allocation Over Time – A/B vs. Multi-Armed Bandit
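The article does not name a specific bandit algorithm. Thompson sampling with a Beta-Bernoulli model is one widely used choice for click data, and the sketch below uses it to simulate how a bandit reallocates impressions. The click-through rates passed in are hypothetical inputs for the simulation; in a live campaign they are unknown and observed clicks take their place.

```python
import random


def thompson_allocation(true_ctrs: list[float], n_rounds: int, seed: int = 0) -> list[int]:
    """Simulate Beta-Bernoulli Thompson sampling and return impressions per variant.

    true_ctrs are hypothetical rates used only to simulate whether each
    impression gets a click.
    """
    rng = random.Random(seed)
    k = len(true_ctrs)
    clicks = [0] * k
    non_clicks = [0] * k
    impressions = [0] * k
    for _ in range(n_rounds):
        # Draw a plausible CTR for each variant from its Beta(1 + clicks, 1 + non-clicks) posterior.
        samples = [rng.betavariate(1 + clicks[i], 1 + non_clicks[i]) for i in range(k)]
        # Serve the variant whose sampled CTR is highest.
        arm = max(range(k), key=lambda i: samples[i])
        impressions[arm] += 1
        # Simulate the user's response and update that variant's counts.
        if rng.random() < true_ctrs[arm]:
            clicks[arm] += 1
        else:
            non_clicks[arm] += 1
    return impressions


if __name__ == "__main__":
    # Two hypothetical promotional banners; the second one performs better.
    shown = thompson_allocation([0.02, 0.06], n_rounds=20_000)
    print(shown)  # most impressions should go to the second banner
```

A fixed 50/50 A/B split would show each banner 10,000 times over the same run, regardless of which one performs better.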