How Automation Solves UA’s Biggest Problems on iOS Today

“Welcome back to 2010.”

That’s what a leading marketer said in an industry Slack group discussion about the challenges posed by SKAdNetwork, the app attribution solution provided by Apple for iOS post-privacy changes.

It’s a scary thought.

Over the past 10 years, mobile marketing has evolved into a sophisticated behemoth of an industry, all centered around the IDFA, a unique device ID that has been leveraged by advertising technology companies to build individual user profiles and predict how each user will behave in the future to serve highly targeted advertising campaigns.

Although the 2010s were a fun time in mobile marketing, we don’t have to take a trip back in the time machine. Automated technologies already exist to solve for the effective deprecation of IDFA. So we thought it imperative to outline in this article which problems need to be solved to ensure marketers can continue to do their job, and share solutions that exist in the market today.

Background

Apple’s AppTrackingTransparency (ATT) framework completely changes how user acquisition is measured, reported, and optimized on iOS. Consequently, user acquisition teams today must morph into full-stack marketing teams. They will no longer have the laser-precise user targeting capabilities of leading ad networks at their disposal, and they will have to accept less precise reporting because fewer users consent to ad tracking.

Naturally, this should lead mobile marketers to think about larger cohorts and accept less certainty than they’re used to, just as offline marketers do today. Those who embrace change will win in this next chapter of mobile.

Before we delve into the details, it’s important to note that marketing teams are crucial to the success of a technology-driven, automated system. Today, automation is often blamed for displacing people from the workforce more generally. In its defense, the application of automation is limited to the less interesting, less valuable work that people do.

We can take the example of Facebook and Google, which seemed to automate much of what was known as user acquisition pre-2015. Although their algorithms finally solved marketers’ eternal challenge of targeting the right person with the right message at the right time, they didn’t offer comparable solutions for creative building and testing, automated campaign creation, or cross-campaign budget allocation. Humans possess strategic thinking, context, and creativity that automated machines will likely never have.

With that, here are seven different ways automation can help mobile marketers solve UA’s biggest problems today.

1. Defining ConversionValue for maximum ad network optimization

Manual approach

Humans can apply a heuristic approach to determining the optimal ConversionValue by estimating early in-app events that may be predictive of LTV. For a mobile game, this may be an early purchase in a game. For a subscription-based app, it may be a user starting a free trial. However, without a sophisticated data science team, most companies will be limited in their understanding of this problem.

The optimal ConversionValue for a specific app is constrained by:

- How Apple allows it to be defined (a 6-bit value)

- The ad networks’ requirements to receive the ConversionValue within a reasonable amount of time for them to collect feedback on the down-funnel performance of the campaign. Today, this is within 24 hours.
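To make the 6-bit constraint concrete, here is a minimal sketch of how early in-app signals could be packed into a value between 0 and 63. The bit layout, the revenue bounds, and the function names are our own illustrative assumptions; they are not a schema defined by Apple or by any ad network.

```python
# Hypothetical bit layout (an assumption for illustration only):
#   bits 5-3: D0 revenue bucket (0-7)
#   bit 2: tutorial finished, bit 1: free trial started, bit 0: returned same day
REVENUE_UPPER_BOUNDS = [0.0, 0.99, 4.99, 9.99, 19.99, 49.99, 99.99]  # USD, made up


def revenue_bucket(revenue):
    """Map D0 revenue to a bucket index from 0 to 7."""
    for index, upper in enumerate(REVENUE_UPPER_BOUNDS):
        if revenue <= upper:
            return index
    return 7  # anything above the last bound


def encode_conversion_value(revenue, tutorial_done, trial_started, returned_same_day):
    """Pack a revenue bucket and three event flags into one 6-bit integer."""
    flags = (int(tutorial_done) << 2) | (int(trial_started) << 1) | int(returned_same_day)
    value = (revenue_bucket(revenue) << 3) | flags
    assert 0 <= value <= 63  # must fit in 6 bits
    return value


def decode_conversion_value(value):
    """Recover the bucket and flags from a 6-bit ConversionValue."""
    return {
        "revenue_bucket": value >> 3,
        "tutorial_done": bool(value & 0b100),
        "trial_started": bool(value & 0b010),
        "returned_same_day": bool(value & 0b001),
    }


example = encode_conversion_value(4.99, True, False, True)
print(example, decode_conversion_value(example))
```

With only 64 possible values, every bit spent on one signal is a bit taken from another, which is why the choice of events matters so much.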

It’s crucial to achieve these two objectives whilst calculating the optimal definition of ConversionValue:

- An in-app event (or string of events) within the first 24 hours that correlates to LTV for ad networks to optimize toward

- The optimal event to be leveraged for probabilistic attribution to be able to rebuild campaign ROAS reporting

Most mobile marketers are only currently focused on the first objective — to be able to leverage SKAdNetwork to continue to optimize their campaigns toward LTV. However, this approach has its limitations. Many ad networks are limiting the ConversionValue reporting window to 24 hours and once an advertiser has sent the ConversionValue to the ad network, they’re unable to learn anything more about a given user.

This means the advertiser:

- Can only optimize campaigns toward D0 ROAS or other D0 KPIs

- Can’t update campaign ROAS based on updated cohort data, i.e. understand how a campaign’s actual and predicted ROAS (pROAS) matures over time

Automated approach

To optimally define ConversionValue, an algorithm can both:

- Determine the mapping of ConversionValue to LTV (e.g. D0 KPI to D365 LTV)

- Calculate the best “clustering” of ConversionValues for probabilistic attribution. We’ll discuss what we mean by “probabilistic attribution” in the next section.

An algorithm can do this by predicting the app feature importance of which D0 in-app events (e.g. purchase, tutorial finish, number of meditations) are predictive of the long-term LTV target for the app (e.g. D180/D365 LTV). A clustering algorithm can then calculate which of these are optimal for probabilistic attribution.
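As a rough illustration of the first step, the sketch below ranks D0 events by how strongly they correlate with a long-term LTV target. Plain Pearson correlation is used here as a simple stand-in for whatever feature-importance method a production model would use, and the event names and user records are made up for the example.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant column carries no signal
    return cov / sqrt(var_x * var_y)


def rank_d0_events(users, ltv_key="ltv_d365"):
    """Rank D0 events by absolute correlation with the LTV target."""
    events = [key for key in users[0] if key != ltv_key]
    ltv = [user[ltv_key] for user in users]
    scores = {e: abs(pearson([user[e] for user in users], ltv)) for e in events}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Toy historical cohort (illustrative values only).
users = [
    {"purchase": 1, "tutorial_finish": 1, "sessions": 3, "ltv_d365": 40.0},
    {"purchase": 0, "tutorial_finish": 1, "sessions": 2, "ltv_d365": 5.0},
    {"purchase": 1, "tutorial_finish": 0, "sessions": 4, "ltv_d365": 35.0},
    {"purchase": 0, "tutorial_finish": 0, "sessions": 1, "ltv_d365": 2.0},
    {"purchase": 0, "tutorial_finish": 1, "sessions": 5, "ltv_d365": 6.0},
]
for event, score in rank_d0_events(users):
    print(f"{event}: {score:.2f}")
```

In this toy data the early purchase ranks first, which matches the heuristic a marketer would reach for. The value of automating the step is that it scales to many events and many apps.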

2. Probabilistically attributing revenue to campaigns and channels

Manual approach

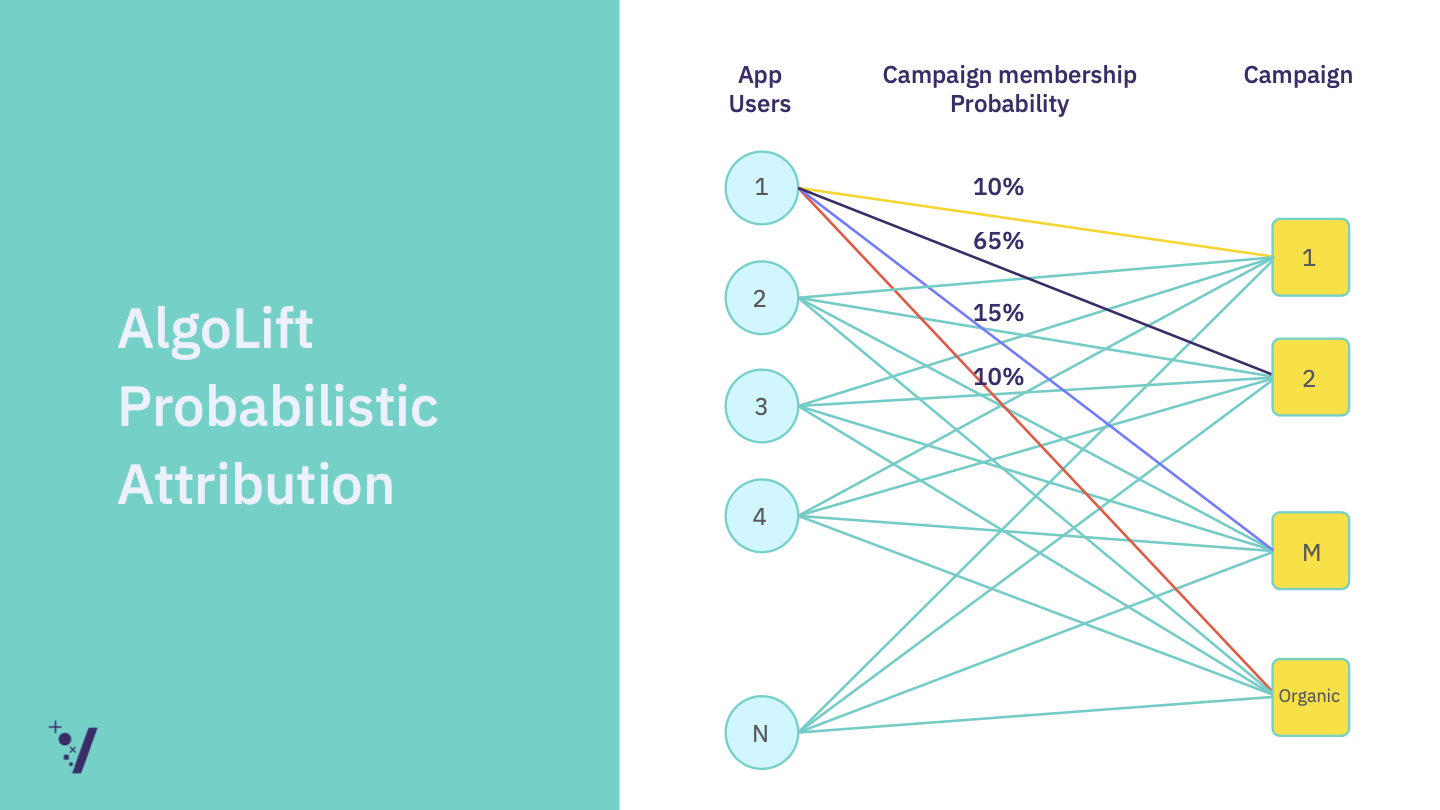

Probabilistic attribution is a system of creating a range of probabilities to attribute revenue from acquired users to their likely campaigns. As discussed above, if a marketer uses only SKAdNetwork to measure the performance of campaigns, they’re unable to understand how the ROAS of an acquired cohort (e.g. campaign) matures over time. Building probabilistic attribution by hand is unrealistic for a marketer, given the size of the data sets involved.


Automated approach

Automation can solve this by creating a probability distribution over the campaigns most likely to have driven each user, using a simple mathematical approach. Although the approach is simple, calculating these probabilities over thousands of installs is computationally taxing.

The machine can then assign campaign membership probabilities to every campaign based on SKAdNetwork data and anonymous user-level app data. It’s then able to update the actual revenue from every user within a campaign based on updated in-app user data — all tracked against a user ID such as a hashed IDFV (identifier for vendors, an analytics ID expressly provided by Apple for the use case of tracking in-app user behavior). We can do this by tracking every user as they spend more within the app and then update the campaign revenue based on the percentage of revenue driven by that campaign. This then gives a complete view of the historical performance of a campaign or channel.
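The idea can be sketched in a few lines. The version below is one simple way to do it and is an assumption on our part, not a description of any vendor’s production model: for each ConversionValue, the share of SKAdNetwork postbacks per campaign is compared with the number of installs seen in the app with that same value, and any installs left over are treated as organic. All names and numbers are illustrative.

```python
from collections import defaultdict


def membership_probabilities(skan_counts, app_installs_by_cv):
    """Return {cv: {campaign_or_organic: probability}}.

    skan_counts: {campaign: {cv: postback count}} from SKAdNetwork.
    app_installs_by_cv: {cv: installs observed in-app with that cv}.
    """
    probabilities = {}
    for cv, observed in app_installs_by_cv.items():
        paid = {c: counts.get(cv, 0) for c, counts in skan_counts.items()}
        total = max(observed, sum(paid.values()))
        if total == 0:
            continue
        dist = {c: n / total for c, n in paid.items()}
        dist["organic"] = (total - sum(paid.values())) / total
        probabilities[cv] = dist
    return probabilities


def attribute_revenue(users, probabilities):
    """Split each user's revenue across campaigns by membership probability."""
    revenue = defaultdict(float)
    for user in users:
        for campaign, p in probabilities[user["cv"]].items():
            revenue[campaign] += p * user["revenue"]
    return dict(revenue)


skan_counts = {"campaign_1": {5: 2, 0: 1}, "campaign_2": {5: 1, 0: 3}}
app_installs_by_cv = {5: 4, 0: 8}
users = [
    {"id": "hashed-idfv-a", "cv": 5, "revenue": 8.0},
    {"id": "hashed-idfv-b", "cv": 0, "revenue": 4.0},
]
probs = membership_probabilities(skan_counts, app_installs_by_cv)
print(attribute_revenue(users, probs))
```

Because revenue is keyed to the anonymous user ID, rerunning `attribute_revenue` as users spend more updates each campaign’s revenue without ever knowing which single campaign a user came from.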

3. Predicting LTV to understand the long-term returns of your campaigns and channels

Manual approach

With SKAdNetwork, marketers are limited to a D0 performance view of their campaigns and channels due to the limitations of reporting. UA teams also have no visibility of the long-term returns of their campaigns (e.g. D180/D365).

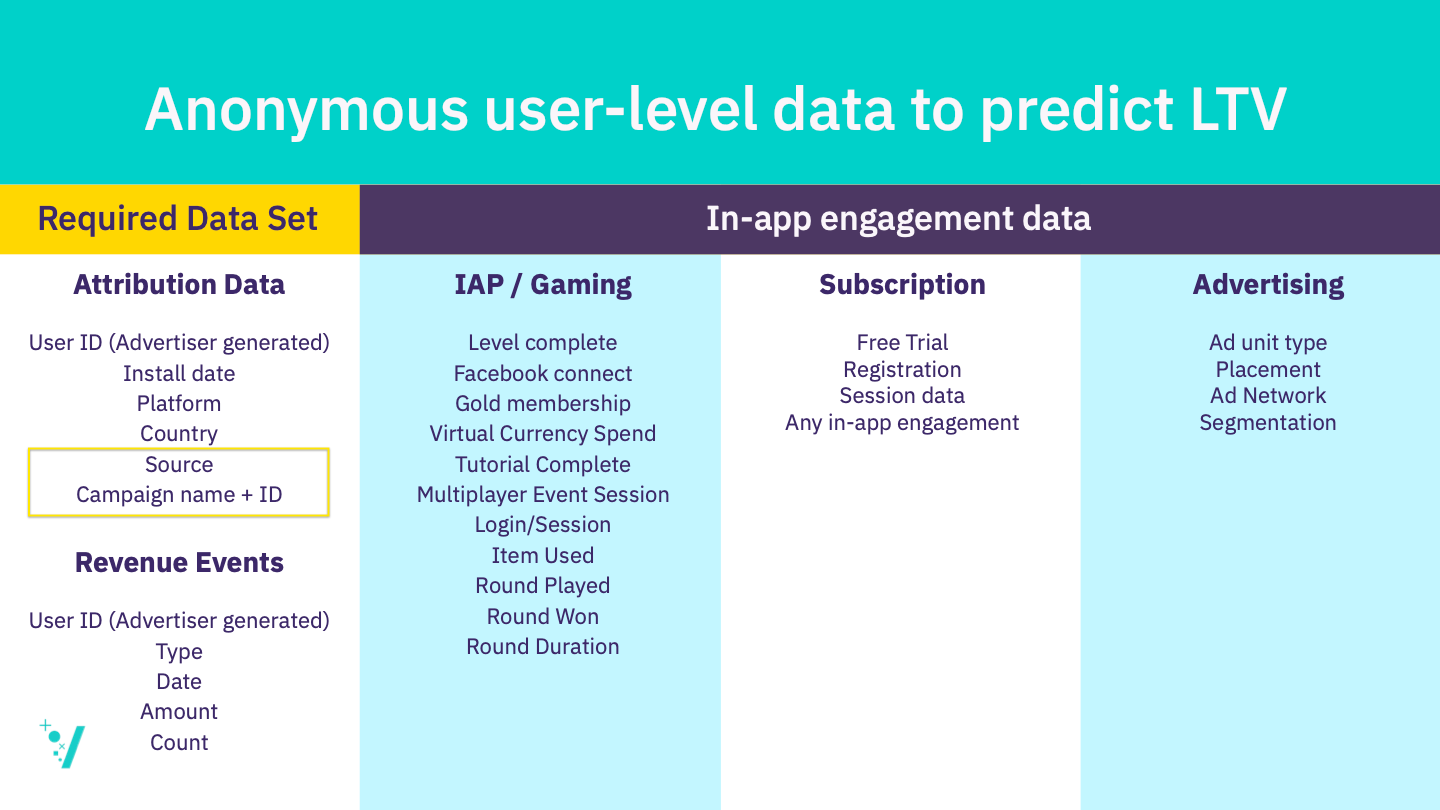

Below are the data sets most companies use to predict LTV. Today, most analytics teams limit their LTV predictions to the cohort level using a D7 ROAS model. This approach doesn’t require complex algorithms to implement and can be calculated in a spreadsheet. However, cohort LTV models are effectively defunct on iOS as key dimensions to predict LTV are missing, namely the source name, campaign name, and campaign ID.

Attribution and in-app user-level data for predicting user-level LTV

Automated approach

An algorithmically-driven system doesn’t need source or campaign ID to predict LTV. The most important features to predict LTV for an algorithm are revenue events and in-app engagement data (especially important for predicting conversion rate).

Instead, an algorithm can leverage the anonymous user ID to make a user-level LTV prediction and keep updating the long-term predicted LTV based on the most recent behavioral information (in-app revenue and engagement data). It can then use these LTV predictions to predict the future returns of campaigns.

4. Reporting long-term campaign ROAS for campaign optimization

Manual approach

A marketer using SKAdNetwork campaign attribution and optimization alone is limited to D0 KPIs for campaign performance measurement and therefore will use this short-term metric to optimize campaigns. This means the budget distribution across their campaigns will be suboptimal relative to their long-term campaign targets, because D0 ROAS is far from perfectly correlated with LTV.

Automated approach

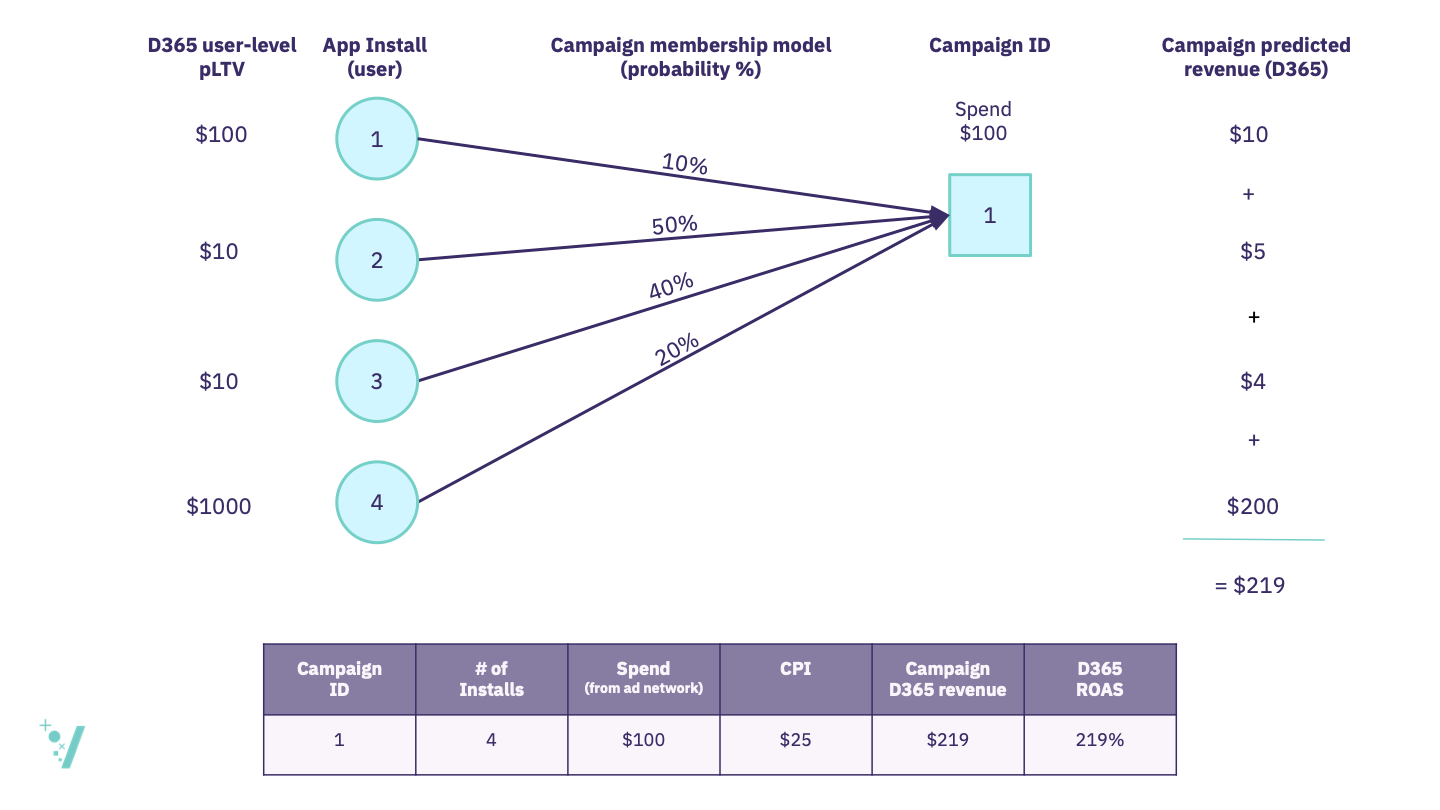

An algorithmic statistical model can automatically calculate a probability distribution for every app install to belong to every campaign. But that doesn’t solve for the predicted revenue from that campaign. Now that we have the actual revenue driven by a campaign (through probabilistic attribution) and the underlying user behavior (from the in-app user-level data), we can use these two data sets to predict future campaign revenue. The mathematics at this point is simple, but the probability distribution and the user-level LTV projections are complex to calculate over thousands or millions of installs and completely out of reach for a human.

A simple math equation can allocate a revenue contribution for each install to each campaign and the organic channel. Below is an example of a mathematical model that multiplies the user-level LTV forecast by the campaign membership probability to calculate a campaign-predicted revenue contribution for each install for each campaign. Once the model sums predicted revenue for all installs that have a probability of greater than 0%, it outputs a campaign predicted revenue. Accounting for spend from the ad network for Campaign 1 ($100) gives us a campaign pROAS.
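The calculation described above can be written out as a short sketch. The LTV forecasts and membership probabilities are made-up inputs; only the $100 spend for Campaign 1 comes from the example in the text.

```python
def campaign_predicted_revenue(installs, campaign):
    """Sum of (user-level LTV forecast x campaign membership probability)."""
    return sum(
        install["ltv_forecast"] * install["membership"].get(campaign, 0.0)
        for install in installs
    )


def predicted_roas(installs, campaign, spend):
    """Campaign pROAS: predicted revenue divided by ad network spend."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return campaign_predicted_revenue(installs, campaign) / spend


# Illustrative installs: each carries an LTV forecast and a probability
# distribution over campaigns and the organic channel.
installs = [
    {"ltv_forecast": 120.0, "membership": {"campaign_1": 0.5, "organic": 0.5}},
    {"ltv_forecast": 60.0, "membership": {"campaign_1": 0.25, "campaign_2": 0.75}},
    {"ltv_forecast": 40.0, "membership": {"organic": 1.0}},
]

print(campaign_predicted_revenue(installs, "campaign_1"))  # 60 + 15 + 0 = 75.0
print(predicted_roas(installs, "campaign_1", spend=100.0))  # 0.75
```

Installs with a 0% probability for a campaign contribute nothing to its sum, so including them is equivalent to summing only over installs with a probability above 0%.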

An algorithm can then output a campaign (and channel)-level predicted ROAS for all SKAdNetwork campaigns. This can then be used for manual campaign optimization or can be a data input for an automated bid and budget optimization system.

5. Making sense of the Apple privacy threshold for effective campaign performance optimization

Manual approach

Apple has implemented a privacy threshold that limits how much information is shared through the SKAdNetwork API, limiting reporting to ConversionValue and the source app ID when volumes are below a certain, and unknown, threshold.

For UA teams, this problem is debilitating. How does a UA manager interpret the performance of a campaign or channel when data may be missing? Would they use only the tracked data? Would they guess what the missing data might be? There is no reasonable way for a UA manager to leverage this data other than to use the tracked ConversionValues.

Automated approach

For a bid and budget UA automation platform, this is a typical multi-armed bandit explore-exploit problem that can be encoded into algorithms. An automated platform can model the pROAS of a campaign and weigh how much more budget to give to a specific campaign to breach the threshold set by Apple.

Once a specific source app ID reports ConversionValue, the algorithm can then exploit that source app ID based on its predicted returns, leveraging ConversionValue together with campaign and geo prior probabilities via probabilistic attribution. Probabilistic attribution accounts for missing ConversionValues by modeling the uncertainty in the unknown ConversionValues and updating attribution probabilities accordingly.
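To show what an explore-exploit rule looks like in practice, here is a minimal sketch using the classic UCB1 bandit formula. UCB1 is our choice for illustration; the text does not say which bandit algorithm a given platform uses. An “observation” is assumed to be a postback that actually reported a ConversionValue, and the campaign statistics are made up.

```python
import math


def ucb_scores(stats, exploration=1.0):
    """Score each campaign as mean pROAS plus an uncertainty bonus.

    stats: {campaign: (observations, mean_proas)}.
    """
    total = sum(n for n, _ in stats.values())
    scores = {}
    for campaign, (n, mean_proas) in stats.items():
        if n == 0:
            scores[campaign] = math.inf  # never observed: explore it first
        else:
            bonus = exploration * math.sqrt(2 * math.log(total) / n)
            scores[campaign] = mean_proas + bonus
    return scores


def next_budget_increment(stats, exploration=1.0):
    """Pick the campaign that should receive the next slice of budget."""
    scores = ucb_scores(stats, exploration)
    return max(scores, key=scores.get)


stats = {
    "campaign_a": (50, 1.2),  # well observed, strong pROAS
    "campaign_b": (2, 0.9),   # barely above the privacy threshold
}
print(next_budget_increment(stats))                   # explores campaign_b
print(next_budget_increment(stats, exploration=0.0))  # pure exploitation: campaign_a
```

The bonus term shrinks as a campaign accumulates reported ConversionValues, so budget moves from campaigns that still need data toward campaigns with proven returns.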

6. Effective cross-channel budgeting for optimal spend portfolio management

Manual approach

Historically, UA managers and finance teams have used a finger-in-the-air approach to cross-channel budgeting. Leveraging user-level attribution from their MMPs and their cohort LTV model, they were able to roughly predict their ad network ROAS relatively easily and determine via a rough estimate how much budget to allocate to a high-performing channel.

Without user-level attribution for users who don’t share their IDFA, it’s far more challenging to assess the appropriate channel-level monthly budget. The most likely method to manually allocate channel budgets would be to distribute based on the total number of ConversionValues tracked at the ad network level and spread spend proportionally based on the total budget. This, however, would be allocating monthly budgets on D0 performance — a completely short-term view of budgeting.

Automated approach

An automated system can leverage long-term probabilistically attributed campaign ROAS reporting to determine the optimal distribution of spend every month to achieve the long-term goal of the portfolio of ad networks using historical ad network spend data and predicted returns from previous months. An algorithmic model can determine how much more spend to allocate to a high-performing ad network before it sees diminishing returns.
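A minimal sketch of this kind of allocation follows. It assumes each ad network’s predicted revenue follows a concave (diminishing-returns) curve, here a logarithmic one whose two parameters would be fitted from historical spend and predicted returns. The curve shape, the parameter values, and the network names are all illustrative assumptions.

```python
import math


def predicted_revenue(spend, scale, saturation):
    """Concave response curve: each extra dollar returns a little less."""
    return scale * math.log1p(spend / saturation)


def marginal_revenue(spend, step, scale, saturation):
    """Extra predicted revenue from adding one more budget step."""
    return predicted_revenue(spend + step, scale, saturation) - predicted_revenue(
        spend, scale, saturation
    )


def allocate_budget(channels, total_budget, step=100.0):
    """Greedily give each budget step to the channel with the best marginal return.

    channels: {name: (scale, saturation)} curve parameters per ad network.
    """
    allocation = {name: 0.0 for name in channels}
    remaining = total_budget
    while remaining >= step:
        best = max(
            channels,
            key=lambda name: marginal_revenue(allocation[name], step, *channels[name]),
        )
        allocation[best] += step
        remaining -= step
    return allocation


channels = {"network_a": (5000.0, 2000.0), "network_b": (2000.0, 1000.0)}
print(allocate_budget(channels, total_budget=1000.0))
```

The high-performing network receives most of the budget, but not all of it: once its marginal return drops below the other network’s, the next step goes elsewhere.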

7. Measuring organic installs with SKAdNetwork to measure incrementality

Manual approach

Marketers have no feasible way to manually identify which users in a mobile app came from organic sources. Yet organic install performance measurement is important for two key reasons:

- For product teams to understand how the product is performing for organic users relative to users acquired through paid channels

- Incrementality, meaning the impact of a paid UA channel on both organics and other channels, is crucial in determining where cross-channel budgets should be allocated. Understanding cross-channel cannibalization is key to ensure we understand the optimal media mix of channels to maximize value for the advertiser.

Automated approach

SKAdNetwork doesn’t report organics (it’s limited to paid advertising), so marketers must use an algorithm to probabilistically attribute the paid installs driven by each advertising campaign. Doing so allows marketers to infer which installs are organic: these users have installed the app but are not accounted for in the SKAdNetwork data. Only once a full accounting of the in-app user-level data and the SKAdNetwork data is complete can marketers begin to understand which users might be truly organic and which are paid.
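At the aggregate level, the accounting reduces to a subtraction, sketched below with made-up numbers. This is a simplification under stated assumptions: it ignores postback delays and installs hidden by the privacy threshold, both of which a real system would need to model.

```python
def estimate_organic_installs(total_app_installs, skan_installs_by_campaign):
    """Installs seen in the app that SKAdNetwork postbacks do not account for."""
    paid = sum(skan_installs_by_campaign.values())
    organic = max(total_app_installs - paid, 0)
    share = organic / total_app_installs if total_app_installs else 0.0
    return {"paid": paid, "organic": organic, "organic_share": share}


# Illustrative figures only.
print(estimate_organic_installs(1000, {"campaign_1": 300, "campaign_2": 150}))
```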

Campaign creation, audience creation, and creative testing and deployment

We’ve outlined seven ways that automation can improve manual tasks for iOS. However, there are three key tasks within UA roles and responsibilities today that are most likely better solved by people than by machines.

- Campaign creation: With SKAdNetwork capping campaigns at 100, campaign setup is key, as the cap significantly constrains how audiences and geos are targeted. However, it’s unclear exactly what the best campaign setup is, and experimentation is required before any form of automation can be applied to the use case.

- Audience creation: The largest ad networks build algorithms that find audiences for advertisers’ apps using their huge datasets. Going forward, audience creation now falls back to the performance marketers as these networks will be unable to build internal “lookalike” tools to better target ads. It’s unclear what tools the networks will make available to target those audiences in the future and so seeding with high-value audiences may be necessary going forward.

- Creative testing and deployment: Optimal creative testing and deployment is one of UA’s toughest challenges. The largest ad networks decide which creative to serve to a user in an automated way so the problem we need to solve is how to give the advertiser the optimal combination of creatives. SKAdNetwork completely removes the ability to match an individual creative’s performance with downstream metrics (i.e. installs, revenue events). Few of the largest ad networks have shared how they’ll optimize and allow for reporting in an ATT-driven world.

Humans have strategic thinking, context, and creativity that machines will likely never have. It’s understandable that most mobile marketers feel like they’re about to take a trip back to 2010 when they look at the tools they’re using today, which were built for a pre-ATT world. However, most of the technical challenges posed by Apple’s privacy changes have already been solved.

Learn more about AlgoLift by Vungle’s measurement solutions that solve for Apple’s privacy changes by contacting us below.